I’ve seen a lot of sentiment around Lemmy that AI is “useless”. I think this tends to stem from the fact that AI has not delivered on, well, anything the capitalists that push it have promised it would. That is to say, it has failed to meaningfully replace workers with a less expensive solution - AI that actually attempts to replace people’s jobs is incredibly expensive (and environmentally irresponsible), and they simply lie and say it’s not. It’s subsidized by that sweet sweet VC money so they can keep the lie up. And I say attempt because AI is truly horrible at actually replacing people. It’s going to make mistakes, and while everybody’s been trying real hard to make it less wrong, it’s just never gonna be “smart” enough to not have a human reviewing its behavior. Then you’ve got AI being shoehorned into every little thing that really, REALLY doesn’t need it. In those cases, I’d say that AI is useless.

But AIs have been very useful to me. For one thing, they’re much better at googling than I am. They save me time by summarizing articles to just give me the broad strokes, and I can decide whether I want to go into the details from there. They’re also good idea generators - I’ve used them in creative writing just to explore things like “how might this story go?” or “what are interesting ways to describe this?”. I never really use what comes out of them verbatim - whether image or text - but it’s a good way to explore and seeing things expressed in ways you never would’ve thought of (and also the juxtaposition of seeing it next to very obvious expressions) tends to push your mind into new directions.

Lastly, I don’t know if it’s just because there’s an abundance of Japanese language learning content online, but GPT-4o has been incredibly useful in learning Japanese. I can ask it things like “how would a native speaker express X?” and it gives me good answers that even my Japanese teacher agreed with. It can also give some incredibly accurate breakdowns of grammar. I’ve tried with less popular languages like Filipino and it just isn’t the same, but as far as Japanese goes it’s like having a tutor on standby 24/7. In fact, that’s exactly how I’ve been using it - I have it grade my own translations and give feedback on what could’ve been said more naturally.

All this to say, AI when used as a tool, rather than a dystopic stand-in for a human, can be a very useful one. So, what are some use cases you guys have where AI actually is pretty useful?

It’s perfect for topics you have professional knowledge of but don’t have perfect recall for. It can bring forward the context you need to be refreshed on but you can fact check it because you are an expert in that field.

If you need boilerplate code for a project but don’t remember a specific library or built-in function that tackles your problem, you can use AI to generate an example you can then fix to make it run the way you wanted.

Same thing with finding config examples for a program that isn’t well documented but you are familiar with.

Sorry, all my examples are tech nerd stuff because I’m just another tech nerd on Lemmy

On the inverse I’ve found it to be quite bad at that. I can generally count on the AI answer to be wrong, fundamentally.

Might depend on your industry. It’s garbage at G-code.

It probably depends on how many good examples it has to pull together from Stack Overflow etc. It’s usually fine writing Python, JavaScript, or PowerShell, but I’d say if you have any level of specific needs it will just hallucinate a fake module or library whose name is a couple of words from your prompt put into a function name. Still, it’s usually good enough for me to get started, either to write my own code or to get enough context that I can google what the actual module is and find some real documentation. “Useful to subject matter experts if there is enough training data” would be my new qualifier.

AI is really good as a starting point for literally any document, report, or email that you have to write. Put in as detailed of a prompt as you can, describing content, style, and length and cut out 2/3 or more of your work. You’ll need to edit it - somewhat heavily, probably - but it gives you the structure and baseline.

This is one of my 2 use cases for AI. I only recently found out, after a life of being told I’m terrible at writing, that I’m actually really good at technical writing. Things like guides, manuals, etc. that are quite literal and don’t have any soul or personality. This means I’m awful at writing things directed at people, like emails and such. So AI gives me a platform where I can enter in exactly what I want to say and tell it to rewrite it in a specific tone or level of professionalism, and it works pretty great. I usually have to edit what it gave me so it flows better or remove inaccurate language, but my emails sound so much better now! It’s also helped me put more personality into my resume and portfolio. So who knows, maybe it’ll help me get a better job?

Yeah, I’m really bad at structuring my writing and coming up with ways to phrase some things, especially when starting with a blank page. Having an existing base to work off of and edit helps me immensely.

It is sometimes good at building SQL code examples, but almost always needs fine-tuning since it doesn’t know the schema specifics.

Having said that one time it gave me code that resulted in an error, then I went back to GPT and said “This code you gave me is giving this error, can you fix it?” and all it would do is say something like “Correct, that code is wrong and will give an error.”

I just pass the create table statements after the instructions. It does pretty good up to 2 or 3 tables, but it will start to make mistakes when things get complicated

On the plus side, it’ll generate tedious code very well - double checking it is less draining than writing it yourself. Especially because I make more typos than it does - I often use it to get a starting point, then write the business logic myself
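The "pass the create table statements after the instructions" trick can be sketched roughly like this. It only builds the prompt text; the function name, the wording of the prompt, and the two tables are made up for illustration, and sending the prompt to a model is left out.

```python
def build_sql_prompt(instructions: str, create_statements: list[str]) -> str:
    """Put the plain-language request first, then the schema the model should stick to."""
    schema = "\n\n".join(stmt.strip() for stmt in create_statements)
    return (
        f"{instructions.strip()}\n\n"
        "Use only the tables and columns defined below:\n\n"
        f"{schema}\n"
    )


# Hypothetical two-table schema (illustration only, not from any real project).
tables = [
    "CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT);",
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL);",
]

prompt = build_sql_prompt("Write a query that totals orders per customer.", tables)
print(prompt)
```

Keeping the schema to the 2 or 3 tables the query actually touches matches the experience described above, where mistakes start once things get complicated.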

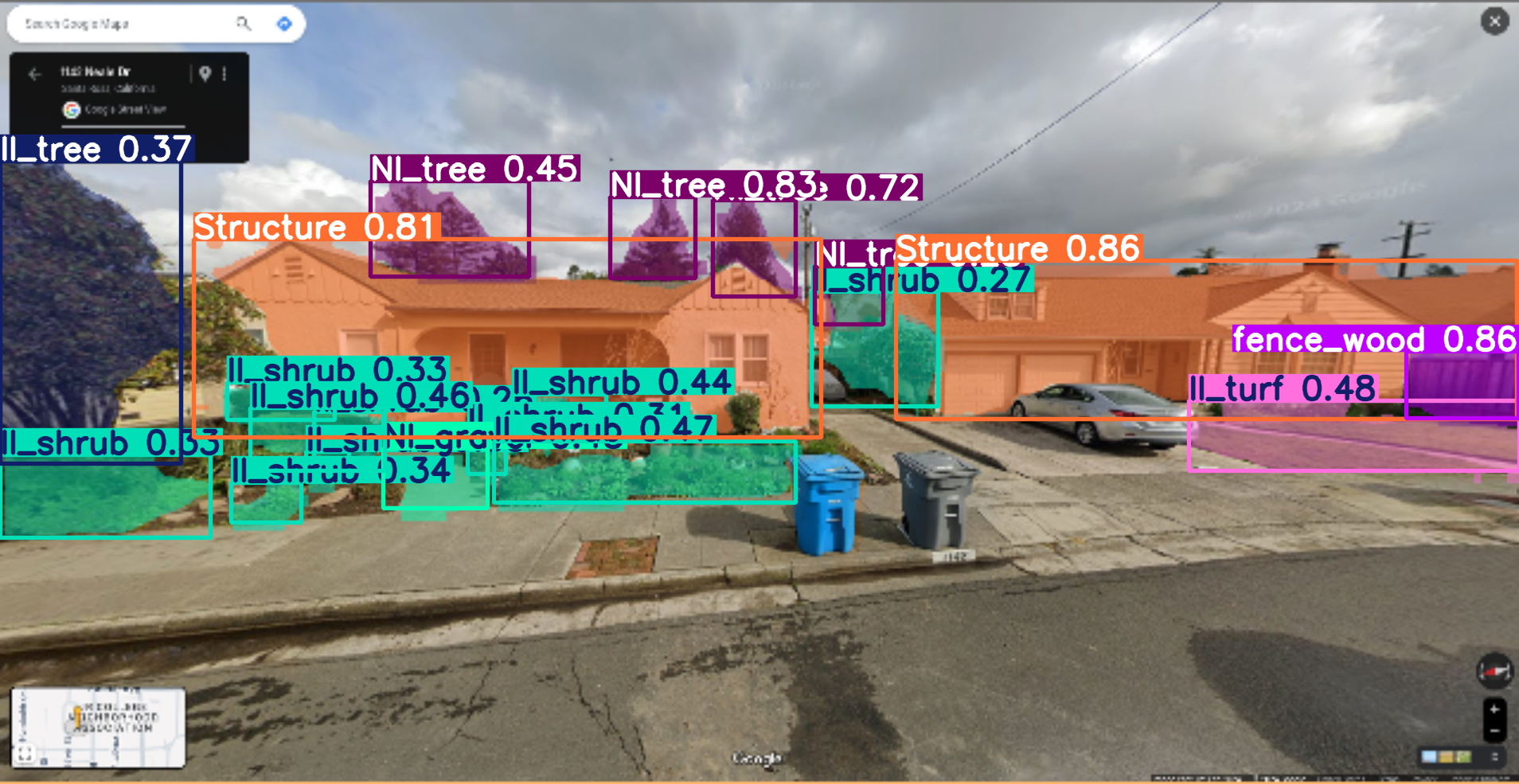

I’ve done several AI/ML projects at nation/state/landscape scale. I work mostly on problems that can be solved, or at least goals that can be worked towards, using computer vision, but I also do all kinds of other ML stuff.

So one example is a project I did for this group: https://www.swfwmd.state.fl.us/resources/data-maps

Southwest Florida Water Management District (aka “Swiftmud”). They had been doing manual updates to a land-cover/land-use map, and wanted something more consistent, automated, and faster. They have several thousand square miles under their management, and they needed annual updates regarding how land was being used and what cover type or condition it was in. I developed a hybrid approach using random forest, super-pixels, and U-Nets to look for regions of likely change, and then to try and identify the “to” and “from” classes of change. I’m pretty sure my data products and methods are still in use largely as I developed them. I built those out right on the back of U-Nets becoming the backbone of modern image analysis (think early 2016), which is why we still had some RF in there (dating myself).

Another project I did was for the State of California. I developed both the computer vision and statistical approaches for estimating outdoor water use for almost all residential properties in the state. These numbers I think are still in use today (in fact I know they are), and haven’t been updated since I developed them. That project was at a 1 sq ft pixel resolution and was just about wall-to-wall mapping for the entire state, effectively putting down an estimate for every single scrap of turf grass in the state, and whether California was going to allocate water budget for you or not. So if you got a nasty-gram from the water company about irrigation, my bad.

These days I work on a small team focused on identifying features relevant for wildfire risk. I’m trying to see if I can put together a short video of what I’m working on right now as I post this.

Example, fresh off the presses for some random house in California:

This is really cool, thanks for sharing.

I’ve learned more C/C++ programming from the GitHub Copilot plugin than I ever did in my entire 42 year life. I’m not a professional, though, just a hobbyist. I used to struggle through PHP and other languages back in the day but after a year of Copilot I’m now leveraging templates and the C++ STL with ease and feelin’ like a wizard.

Hell maybe I’ll even try Rust.

Every LLM I’ve tried sucks at Rust. The book is great: you learn all of the essentials of Rust, and it is also pretty easy to read.

I imagine that’s because Rust is still a relative newcomer to the industry and C/C++ have half a century of code out there.

Genuinely, nothing so far.

I’ve tinkered with it but I basically don’t trust it. For example, I don’t trust it to summarise documents or articles accurately every time, I don’t trust it to perform a full and comprehensive search, and I don’t trust it not to provide me false or inaccurate information.

LLMs have potential to be useful tools, but what’s been released is half baked and rushed to market as part of the current bubble.

Why would I use tools that inherently “hallucinate” - i.e. are error-strewn? I don’t want to fact check the output of an LLM.

This is in many ways the same as not relying on Wikipedia for information. It’s a good quick summary but you have to take everything with a pinch of salt and go to primary sources. I’ve seen Wikipedia be wildly inaccurate about topics I know in depth, and I’ve seen AI do the same.

So pass until the quality goes up. I don’t see that happening in the near future as the focus seems to be monetisation, not fixing the broken products. Sure, I’ll tinker occasionally and see how it’s getting on but this stuff is basically not fit for purpose yet.

As the saying goes, all that glitters is not gold. AI is superficially impressive but once you scratch the surface and have to actually rely on it then it’s just not fit for purpose beyond a curio for me.

If you already kinda know programming and are learning a new language or framework it can be useful. You can ask it “Give me an if statement in Tcl” or whatever and it will spit something out you can paste in and see if it works.

But remember that AI are like the fae: Do not trust them, and do not eat anything offered to you.

Software developer here, who works for a tiny company of 27 employees and 2 owners.

We use CoPilot in Visual Studio Professional and it’s saved us countless hours due to it learning from your code base. When you make enterprise software there are a lot of standards and practices that have been honed over time; that means we write the same things over and over and over again. This is a massive time sink, and this is where LLMs come in and can do the boring stuff for us so we can actually solve the novel problems that we are paid for. If I write a comment of what I’m about to do it will complete it.

For boilerplate stuff it’s mostly 100% correct; for other things it can be anywhere from 0-100%, and even if it’s not completely correct it takes less time to make a slight change than to do it all ourselves.

One of the owners is the smartest person I’ve ever met and also the lead engineer, if he can find it useful then it has its use cases.

We even have a tool based on AI that he built that watches our project. If I create a new model or add a field to a model, it will scaffold a lot of stuff, for instance the Schemas (Mutations and Queries), the TypeScript layer that integrates with GraphQL, and basic views. This alone saves us about 45 minutes per model. Sure, this could likely be achieved without an LLM, but it’s a useful tool and we have embraced it.
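For a rough idea of what the "without an LLM" version of that scaffolding could look like, here is a minimal template-based sketch. It is not the tool described above: the type mapping, function names, and the `Invoice` model are all hypothetical, and it only emits GraphQL schema text rather than a TypeScript layer or views.

```python
# Hypothetical mapping from simple model field types to GraphQL scalar names.
GRAPHQL_TYPES = {"int": "Int", "str": "String", "float": "Float", "bool": "Boolean"}


def scaffold_graphql_type(model_name: str, fields: dict[str, str]) -> str:
    """Render a GraphQL object type for a model, one non-null field per entry."""
    lines = [f"type {model_name} {{"]
    for field_name, field_type in fields.items():
        lines.append(f"  {field_name}: {GRAPHQL_TYPES[field_type]}!")
    lines.append("}")
    return "\n".join(lines)


def scaffold_query(model_name: str) -> str:
    """Render a Query type with a single lookup-by-id field for the model."""
    field = model_name[0].lower() + model_name[1:]
    return f"type Query {{\n  {field}(id: ID!): {model_name}\n}}"


# Made-up model, used only to show the output shape.
print(scaffold_graphql_type("Invoice", {"number": "str", "total": "float"}))
print(scaffold_query("Invoice"))
```

A fixed template like this only covers the cases someone wrote a template for, which is presumably where an LLM-backed tool earns its keep.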

Software developer here, who works for a tiny company of 2 employees and 2 owners.

We use CoPilot

Sorry to hear about your codebase being leaked.

This isn’t something that happens when you’re paying for a premium subscription. Sure they could go against terms and conditions but that would mean lawsuits and such.

I switched to Linux a few weeks ago and i’m running a local LLM (which was stupidly easy to do compared to windows) which i ask for tips with regex, bash scripts, common tools to get my system running as i prefer, and translations/definitions. i don’t copy/paste code, but let it explain stuff step by step, consult the man pages for the recommended tools, and then write my own stuff.

i will extend this to coding in the future; i do have a bit of coding experience, but it’s mainly Pascal, which is horrendously outdated. At least i already have enough basic knowledge to know when the internal logic of what the LLM is spitting out is wrong.

Which local LLM do you use?

i’m currently using Alpaca with a few LLMs installed, but i really like llama2 uncensored, which is pretty fast and responsive on my system.

Llama 2 is really ancient now.

Try Qwen 2.5, whatever size fits on your system (probably 14B?). It’s like night and day compared to llama2, and 34B/72B are like API model smart.

thanks for the recommendation, will try it out over the next few days :-)

I can link you to a good quantization, depending on your hardware!

And if you need long context (Qwen 2.5 is 32K, or potentially more), I can also point to the appropriate framework/settings.

OP seems to be talking about generative AI rather than AI broadly. Personally I have three main uses for it:

- It has effectively replaced google for me.

- Image generation enables me to create pictures I’ve always wanted to but never had the patience to practise.

- I find myself talking with it more than I talk with my friends. They don’t seem interested in anything I’m interested in, but ChatGPT at least pretends to be

Your third point reminded me of a kid who recently committed suicide and whose only friend was an AI bot.

(commenting from alt account as lemm.ee is down again)

Don’t worry, I’m relatively satisfied with my life and have no desire to end it. I’m just in the lonely chapter of my life where I’ve outgrown my old friend group but haven’t yet found the new ones. I don’t consider AI my friend. It’s just something to bounce my esoteric thoughts off.

AI isn’t useless, but its current forms are just rebranded algorithms with every company racing to get theirs out there. AI is a buzzword for tools that were never supposed to be labeled AI. Google has been doing summary excerpts for like a decade. People blindly trusted it and always said “Google told me”. I’d consider myself an expert on one particular car and can’t tell you how often those “answers” were straight up wrong or completely irrelevant to one type of car (hint, Lincoln LS does not have a blend door so heat problems can’t be caused by a faulty blend door).

You cite Google searches and summarization as its strong points. The problem is, if you don’t know anything about the topic or not enough, you’ll never know when it makes mistakes. When it comes to Wikipedia, journal articles, forum posts, or classes, mistakes are possible there too. However, those get reviewed by knowledgeable people. Your AI results don’t get that review. Your AI results are pretending to be master of the universe so their range of results is impossibly large. That then goes on to be taken as pure fact by a typical user. Sure, AI is a tool that can educate, but it demonstrably gets enough wrong that I’d call it a net neutral change to our collective knowledge. Just because it gives an answer confidently doesn’t mean it’s correct. It has a knack for missing context from more opinionated sources and reporting the exact opposite of what is true. Yes, it’s evolving, but keep in mind one of the meta tech companies put out an AI that recommended using Elmer’s glue to hold cheese to pizza and claimed cockroaches live in penises. ChatGPT had its hallucinatory days too, it just got forgotten due to Bard’s flop and Cortana’s unwelcome presence.

Use the other two comments currently here as an example. Ask it to make some code for you. See if it runs. Do you know how to code? If not, you’ll have no idea if the code works correctly. You don’t know where it sourced it from, you don’t know what it was trying to do. If you can’t verify it yourself, how can you trust it to be accurate?

The biggest gripe for me is that it doesn’t understand what it’s looking at. It doesn’t understand anything. It regurgitates some pattern of words it saw a few times. It chops up your input and tries to match it to some other group of words. It bundles it up with some generic, human-friendly language and tricks the average user into believing it’s sentient. It’s not intelligent, just artificial.

So what’s the use? If it was specifically trained for certain tasks, it’d probably do fine. That’s what we really already had with algorithmic functions and machine learning via statistics, though, right? But parsing the entire internet in a few seconds? Not a chance.

Edit: can’t believe I there’d a their

I’ve been learning Docker over the last few weeks and it’s been very helpful for writing and debugging docker-compose configs. My server now has 9 different services running on it.

I use it for Python development sometimes, maybe once per day. I’ll paste in a chunk of code and describe how I want it altered or fixed and that usually goes pretty well. Or if I need a generic function that I know will have been coded a million times before I’ll just ask ChatGPT for it.

It’s far from “useless” and has made me somewhat more productive. I can’t see it replacing anyone’s job though, more of a supplemental tool that increases output.

I’ve definitely run into this as well in my own self-hosting journey. When you’re learning it’s easier to get it to just draft up a config, then learn what the options mean after the fact, than it is to RTFM from the beginning.

Troubleshooting technology.

I’ve been using Linux as my daily driver for a year and a half and my learning is going a lot quicker thanks to AI. It’s so much easier to ask a question and get an answer instead of searching through Stack Overflow for 30 minutes.

That isn’t to say that the LLM never gives terrible advice. In fact, two weeks ago, I was digging through my logs for a potential intruder (false alarm) and the LLM gave me instructions that ended up deleting journal logs completely.

The good far outweighs the bad for sure tho.

The Linux community specifically has an anti-AI tilt that is embarrassing at times. LLMs are amazing, and much like random strangers on the internet, you don’t blindly trust/follow everything they say, and you’ll be just fine.

The best way I think of AI is that it’s going through a bubble not unlike the early days of the internet. There were a lot of overvalued companies and scams, but it still ushered in a new era.

Another analogy that comes to mind is how people didn’t trust Wikipedia 20 years ago because anyone could edit it, and now it is one of the most trusted sources for information out there. AI will never be as ‘dumb’ as it is today, which is ironic because a lot of the perspective I see on AI was formed around free models from 2023.

I really hate AI as it is now only because of all the weird marketing people are doing for it; pretending they don’t know how it works like “omg it’s not supposed to do that idk why it’s doing that”. Anyone can see its potential though once they can see through all the SEO bullshit. Like you said, it’s in its infancy now and will take a long time to truly mature and it will be amazing when/if it does.

There is an inherent catch-22 in that the people who believe AI is a fluke will rarely have the time or interest to notice the vast improvements since the initial launch 2-3 years ago.

I am as frugal as they come, yet I shell out money for the paid version and have a reference point on its helpfulness that is grounded in experience. It is almost impossible to create a reference point for those who have no (recent) experience and refuse to get any.

It’s human nature/Dunning-Kruger so I can’t be too frustrated I suppose.

I use it like an intern/other team member since the non-profit I work for doesn’t have any money to hire more people. Things like:

- Taking transcripts of meetings and turning them into neat and ordered meeting minutes/summaries, or pulling out any key actions/next steps

- Putting together objectives and agendas for meetings based on some loose info and ideas I give it

- Summarising the key points from articles/long documents I don’t have time or patience to read through fully.

- Making my emails sound more professional/nicer/make up for my brainfarts

- Giving me ideas on how to format/word slides and documents depending on what tone I want to employ - is it meant for leadership? Other team members?

- Make my writing more organised/better structured/more professional sounding

- Writing emails in foreign languages with a professional tone. Caveat is I’m fluent enough in those languages to know if the output sounds right. Before AI I would rely on google translate (meh), dictionaries, language forums, etc and it would take me HOURS to write a simple email using the correct terminology. Also helpful to check grammar and sentence structure in ways that aren’t always picked up by Word.

- I sound more like a robot than an actual robot, so I ask the robot to reword my emails/messages to sound more “human” when the need arises (like a colleague is leaving, had a baby, etc).

- Bouncing off ideas. This doesn’t always work and I know it doesn’t actually have an opinion, but it helps get the ball rolling, especially if I’m struggling with procrastination.

- If my sentences are too long for a document, I ask it to shorten/reword and it’s pretty capable of doing that without losing too much of the essence of what I want to get across

Of course I don’t just take whatever it spits out and paste it. I read through everything, make sure it still sounds more or less like “me”. Sometimes it’ll take a couple of prompts to get it to go where I want it, and takes a bit of review and editing but it saves me literal hours. It’s not necessarily perfect, but it does the job. I get it’s not a panacea, and it’s not great for the environment, but this tech is literally saving my sanity right now.

I couldn’t let an AI do any of this for me.

As in… I couldn’t let anyone make my emails more professional or whatever.

It’s not like I think my emails are always the best and can not be improved upon, it’s just that my emails are “me”.

I never have cause to write an email in a foreign language.

To each their own ¯\\\_(ツ)\_/¯

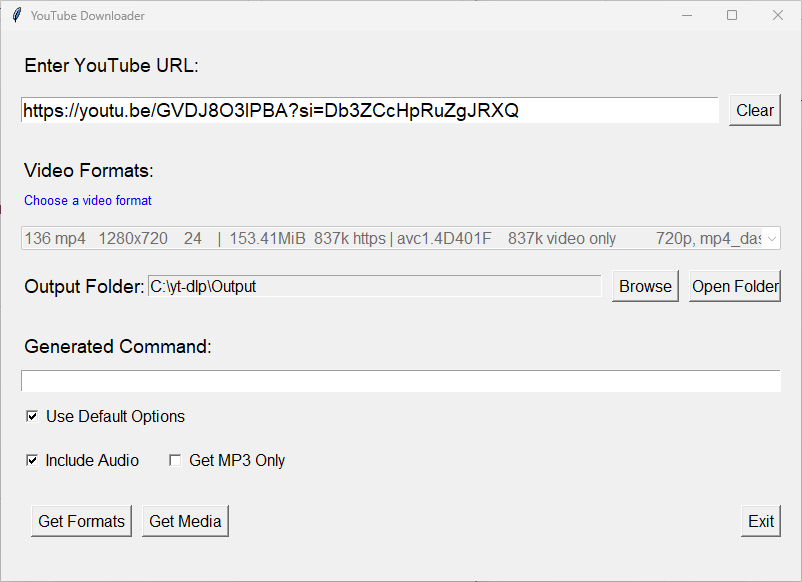

I used it to write a GUI frontend for yt-dlp in python so I can rip MP3s from YouTube videos in two clicks to listen to them on my phone while I’m running with no signal, instead of hand-crafting and running yt-dlp commands in CMD.

Also does HD video rips with audio encoding, if I want.

It took us about a day to make a fully polished product over 9 iterative versions.

It would have taken me a couple weeks to write it myself (and therefore I would not have done so, as I am supremely lazy)
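For anyone curious what sits underneath a frontend like that, here is a minimal sketch of the command-building part, without the GUI. It assumes `yt-dlp` is installed and on the PATH, and that ffmpeg is available for the MP3 conversion. The function names, output folder, and URL are placeholders, not the actual app.

```python
import subprocess


def build_mp3_command(url: str, output_dir: str = ".") -> list[str]:
    """Build the yt-dlp argument list that extracts audio and converts it to MP3."""
    return [
        "yt-dlp",
        "--extract-audio",          # keep only the audio track
        "--audio-format", "mp3",    # convert it to MP3 (yt-dlp uses ffmpeg for this)
        "--output", f"{output_dir}/%(title)s.%(ext)s",  # name the file after the video title
        url,
    ]


def rip_mp3(url: str, output_dir: str = ".") -> None:
    """Run yt-dlp; raises CalledProcessError if the download fails."""
    subprocess.run(build_mp3_command(url, output_dir), check=True)


# Placeholder URL and folder, printed instead of executed.
command = build_mp3_command("https://example.com/watch?v=PLACEHOLDER", "music")
print(" ".join(command))
```

A button in the GUI would then just call something like `rip_mp3` with whatever is in the URL box.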